If you’re searching for smarter ways to improve your app’s performance, boost engagement, and increase conversions, you’re in the right place. Optimizing an app today isn’t just about design or features—it’s about understanding user behavior, leveraging data, and applying proven app A/B testing methods to make confident, results-driven decisions.

This article is designed to help you cut through the noise. We break down the latest app optimization techniques, emerging tech tools, and smart ecosystem strategies that actually move the needle. Whether you’re refining onboarding flows, testing feature releases, or improving retention metrics, you’ll find practical insights you can apply immediately.

Our guidance is built on in-depth analysis of real-world app performance trends, modern software frameworks, and current innovation alerts shaping the industry. Instead of theory, you’ll get clear explanations and actionable strategies—so you can optimize faster, reduce guesswork, and build an app experience users genuinely value.

Beyond Guesswork: A Framework for Data-Driven App Improvement

Too many app teams rely on “gut feel” (which is great for pizza toppings, less so for product strategy). Research cited in Harvard Business Review suggests that companies running controlled experiments grow revenue up to 2–3x faster than those that don’t. The difference? Systems.

Start with A/B testing to compare two versions of a feature that differ in a single variable. Then expand into multivariate testing to measure how several changes interact. Complement this with:

- Usability testing to observe real user friction

- Performance testing to reduce load times (a 1-second delay can cut conversions by 7%, according to Akamai)

Data beats guesswork, every time.

Establishing Your Testing Foundation: Goals, Metrics, and Tools

Before you run a single experiment, define what success actually means. Are you improving retention (the percentage of users who return), conversion rate (the share of users who take a desired action), session duration, or task completion time? Clear goals keep you from chasing “vanity metrics” (numbers that look good but drive no impact).

Choosing KPIs That Matter

Every goal needs a measurable Key Performance Indicator (KPI). If you launch a new onboarding flow, track the percentage of users who complete it and the drop-off rate at each step. Specific metrics turn opinions into evidence.

Pro tip: Limit primary KPIs to one or two per test to avoid diluted insights.
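To make the onboarding KPI concrete, here is a minimal sketch of a funnel report. The step names and the input format (one "furthest step reached" value per user) are assumptions for illustration; your analytics export will look different.

```python
from collections import Counter

# Hypothetical onboarding steps, in order. Replace with your own event names.
STEPS = ["welcome", "create_account", "set_preferences", "finish"]

def funnel_report(furthest_step_per_user):
    """Return (step, users_reached, drop_off_from_previous_step) for each step."""
    # Ignore values that are not known funnel steps.
    furthest = Counter(s for s in furthest_step_per_user if s in STEPS)
    remaining = sum(furthest.values())
    report = []
    previous = None
    for step in STEPS:
        # Everyone still "remaining" has reached this step.
        drop = 0.0 if not previous else 1 - remaining / previous
        report.append((step, remaining, round(drop, 3)))
        previous = remaining
        # Users who stopped here never reach the next step.
        remaining -= furthest.get(step, 0)
    return report

def completion_rate(report):
    """Share of users who entered the funnel and reached the last step."""
    started, finished = report[0][1], report[-1][1]
    return finished / started if started else 0.0

# Made-up sample: 10 users and the furthest step each one reached.
sample = (["welcome"] * 2 + ["create_account"] * 3
          + ["set_preferences"] * 1 + ["finish"] * 4)
for row in funnel_report(sample):
    print(row)
print(completion_rate(funnel_report(sample)))
```

Completion rate works well as the primary KPI here, with the per-step drop-off as the diagnostic that tells you where to look.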

Building the Essential Tech Stack

A strong ecosystem combines three layers: analytics platforms like Amplitude or Mixpanel for behavioral insights, experimentation tools such as Firebase A/B Testing or Optimizely to run the tests themselves, and performance monitoring tools to track crashes and load times. Each layer adds clarity, and clarity drives smarter optimization decisions.

A/B Testing: The Cornerstone of Iterative Design

What Is A/B Testing?

A/B testing is a method of splitting user traffic between two versions of the same experience: Version A (control) and Version B (variant). Each group sees a different version, and you measure which one performs better against a specific KPI (Key Performance Indicator, such as sign-ups or purchases). In short, you’re letting real user behavior—not opinions—decide.

For example, if 1,000 users visit your app, 500 might see the original sign-up screen and 500 see a revised version. If Version B generates more completed registrations, you have evidence to support the change.
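One common way to produce a split like this is deterministic hashing, so each user lands in the same group on every session. The sketch below is an illustration under assumptions, not how any particular A/B framework does it; the function name, the user ID format, and the experiment name are all hypothetical.

```python
import hashlib

def assign_variant(user_id: str, experiment: str, variants=("A", "B")) -> str:
    """Deterministically map a user to a variant for one experiment."""
    # Including the experiment name keeps assignments independent across experiments.
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]

# Simulate 1,000 hypothetical users hitting the sign-up screen.
counts = {"A": 0, "B": 0}
for i in range(1000):
    counts[assign_variant(f"user-{i}", "signup_screen_v2")] += 1
print(counts)  # close to an even split, though rarely exactly 500 / 500
```

Because the assignment depends only on the user ID and the experiment name, no lookup table is needed, and a returning user never flips between versions mid-test.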

Formulating a Strong Hypothesis

A good hypothesis is specific and measurable. Structure it like this:

Changing X will increase Y by Z% because of reason R.

Example: “Changing the CTA button from blue to green will increase sign-ups by 10% because green is associated with ‘Go.’” Now you’re not guessing—you’re testing a clear prediction.
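A measurable hypothesis also lets you estimate, before launch, roughly how many users the test needs. The sketch below uses the standard normal-approximation formula for comparing two proportions. The 20% baseline sign-up rate, the 5% significance level, and the 80% power are assumptions chosen for illustration.

```python
import math
from statistics import NormalDist

def users_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate users needed in EACH group to detect the hypothesized lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided test
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)

# Hypothesis: sign-ups rise by 10% (relative) from an assumed 20% baseline.
print(users_per_variant(0.20, 0.10))  # on the order of 6,500 users per group
```

Small predicted lifts need large samples, which is one reason a hypothesis with a bolder, well-reasoned Z% is easier to validate.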

Practical Examples for A/B Tests

You can apply app A/B testing methods to:

- UI Elements: Button colors, headline copy, icon design.

- User Flows: Onboarding steps, checkout processes, feature discovery paths.

- Messaging: Push notification wording, in-app pop-ups.


Analyzing Results

Results require careful interpretation. Statistical significance means the observed difference is unlikely to be due to chance alone; the common threshold is a 95% confidence level, which corresponds to a p-value below 0.05. Avoid ending tests too early, because small sample sizes can mislead. Also, don’t ignore qualitative feedback. Numbers tell you what happened; user comments often explain why. (And yes, sometimes the “losing” version teaches you more.)
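As a rough illustration of the significance check, here is a two-proportion z-test written with only the Python standard library. The conversion counts are made up; most testing platforms run an equivalent (or more sophisticated) calculation for you.

```python
import math
from statistics import NormalDist

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all, so nothing to detect
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# Hypothetical result: 500 users per group, 100 vs. 120 sign-ups.
z, p = two_proportion_z_test(100, 500, 120, 500)
print(round(z, 2), round(p, 2))  # p is about 0.13, above the 0.05 threshold

# The same rates with ten times the traffic clear the threshold easily.
z, p = two_proportion_z_test(1000, 5000, 1200, 5000)
print(p < 0.05)
```

The first case shows why stopping early is risky: a 20% relative lift looks impressive, yet with 500 users per group it is still within the range chance could produce.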

Advanced Methods: Multivariate and Usability Testing

Going Deeper with Multivariate Testing (MVT)

Multivariate Testing (MVT) is an advanced experimentation method that tests multiple elements on a single screen at the same time—such as a headline, hero image, and button color—to determine which combination performs best. Unlike traditional A/B tests (which compare Version A vs. Version B), MVT analyzes interaction effects between variables. For example, a red button may outperform a blue one—but only when paired with a benefit-driven headline.

Case studies from Google Optimize report conversion lifts in the 10–30% range when element interactions are optimized. That lift often comes from combinations, not single tweaks.

When to Use MVT

MVT requires high traffic because every combination of elements becomes its own variation, and each variation receives only a fraction of your audience. If your app sees thousands of daily users, MVT can accelerate insight. Low-traffic apps, however, may struggle to reach statistical significance (a result that is unlikely to be explained by chance alone).
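To see how quickly traffic thins out, the sketch below enumerates every combination for a hypothetical screen with three elements. The element names, options, and traffic figures are assumptions for illustration.

```python
from itertools import product

# Hypothetical elements under test and the options for each.
elements = {
    "headline": ["feature-led", "benefit-led"],
    "hero_image": ["photo", "illustration"],
    "button_color": ["red", "blue", "green"],
}

def mvt_cells(elements):
    """List every combination of options as a dict (a full-factorial design)."""
    names = list(elements)
    return [dict(zip(names, combo)) for combo in product(*elements.values())]

def users_per_cell(daily_users, days, cells):
    """Users each combination receives if traffic is split evenly."""
    return daily_users * days // len(cells)

cells = mvt_cells(elements)
print(len(cells))                       # 2 * 2 * 3 = 12 combinations
print(users_per_cell(3000, 14, cells))  # 42000 // 12 = 3500 users per combination
```

Adding a fourth element with three options would triple the number of cells, which is why trimming the option list is often the cheapest way to shorten an MVT.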

The Human Element: Usability Testing

Data shows what users do. Usability testing reveals why. In moderated sessions, facilitators observe users completing tasks in real time. Unmoderated tests collect recordings at scale. Nielsen Norman Group reports that testing with just five users can uncover up to 85% of usability issues.
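The five-user figure comes from a simple probability model: if each tester independently surfaces a given issue with probability p, then n testers surface it with probability 1 - (1 - p)^n. The sketch below assumes p = 0.31, the average Nielsen reports for this model; your own product may behave differently.

```python
def share_of_issues_found(testers, p_single=0.31):
    """Expected share of usability issues found by a given number of testers."""
    # p_single is an assumed average detection rate per tester.
    return 1 - (1 - p_single) ** testers

for n in (1, 3, 5, 10):
    print(n, round(share_of_issues_found(n), 2))
# With 5 testers the model gives about 0.84, the basis for the "85%" rule of thumb.
```

The curve flattens quickly, so several small rounds of testing between design iterations tend to pay off more than one large round.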

Use findings from usability sessions to refine hypotheses for future A/B tests. Quantitative patterns plus qualitative insight? That’s where optimization gets real traction.

Optimizing for Speed and Stability: Performance Testing

Performance isn’t just a technical metric; it’s a UX feature. Users may not articulate it this way, but they feel it. A slow launch, battery drain, or a random crash erodes trust (and patience). Research from Google found that 53% of mobile site visits are abandoned if a page takes longer than three seconds to load. App users are unlikely to be more forgiving. Speed and stability directly influence retention.

So, what should you focus on?

First, prioritize load testing. This evaluates how your app performs under heavy traffic. If a marketing push succeeds, can your infrastructure handle the spike? Next, conduct stress testing to intentionally push the app past its limits. Knowing the breaking point helps you reinforce weak areas before users find them. Then, simulate real-world friction with network condition testing. Not everyone has perfect Wi‑Fi—test under slow or unstable cellular conditions to ensure resilience.

Key Metrics That Actually Matter

Track app launch time, screen rendering speed, API response times, and crash rates. Don’t just collect data; act on it. Roll out each performance optimization as an A/B test so you can confirm it helps before releasing it to everyone.

Pro tip: Set performance budgets early (for example, a two‑second launch cap) and treat overruns as seriously as broken features. Fast, stable apps win—every time.
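A performance budget is easiest to enforce when it is checked automatically. The sketch below compares the 95th-percentile launch time against the two-second cap; the choice of p95, the function names, and the sample timings are assumptions for illustration.

```python
from statistics import quantiles

LAUNCH_BUDGET_SECONDS = 2.0  # the two-second launch cap used as an example budget

def p95(samples):
    """95th percentile of the samples (needs at least two data points)."""
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    return quantiles(samples, n=20)[-1]

def check_budget(samples, budget=LAUNCH_BUDGET_SECONDS):
    """Return (within_budget, observed_p95)."""
    observed = p95(samples)
    return observed <= budget, observed

# Hypothetical launch times in seconds: most are fast, one in ten is slow.
launch_times = [1.0] * 90 + [3.0] * 10
ok, observed = check_budget(launch_times)
print(ok, observed)  # False 3.0: the slow tail breaks the budget
```

Checking a high percentile rather than the average keeps the slowest launches visible; the average of this sample is 1.2 seconds and would pass unnoticed.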

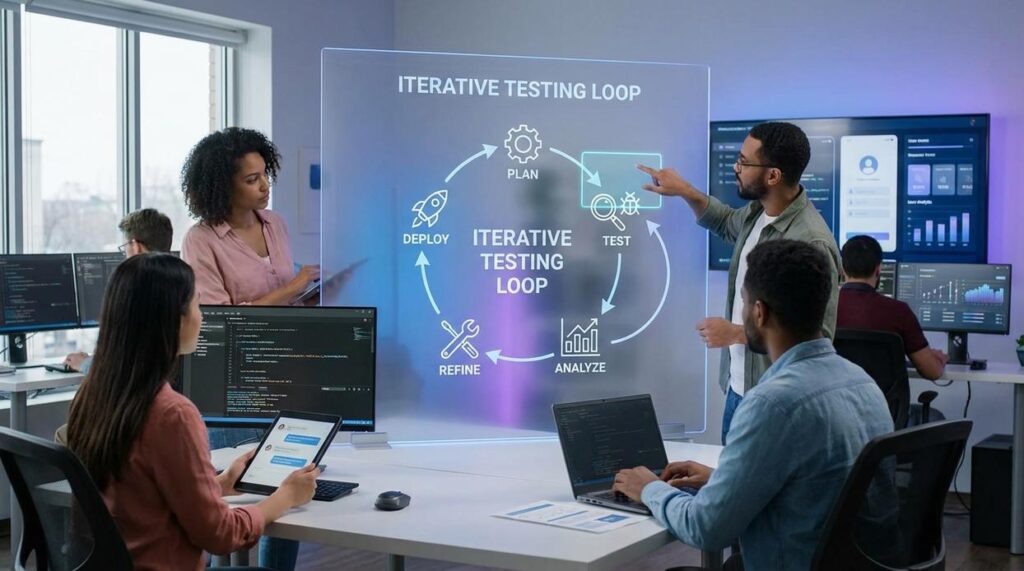

Implementing a continuous improvement cycle means treating every release as a learning opportunity. This article equipped you with a practical toolkit to refine UX and performance without guesswork. The real shift is simple: stop shipping features blindly and validate changes with real user data.

Start small. Identify one metric—conversion rate, retention, load time—and define what success looks like. Then design a clear hypothesis and test it using app A/B testing methods. Track results, document insights, and iterate.

Some teams worry this slows development. In reality, structured experimentation prevents costly missteps. Build the loop, review results weekly, and keep improving with confidence.

Turn Insight Into Smarter App Growth

You came here looking for a clearer, more practical way to optimize your app and drive meaningful growth. Now you understand how smarter experimentation, data-backed decisions, and app A/B testing methods work together to eliminate guesswork and improve performance.

The real pain point isn’t a lack of ideas — it’s not knowing which changes will actually move the needle. Without structured testing and reliable optimization frameworks, growth stalls, engagement drops, and user acquisition costs climb.

The good news? You now have a roadmap. By applying structured app A/B testing methods, refining user flows, and leveraging intelligent app ecosystems, you can turn raw traffic into loyal, high-retention users.


Your users expect better experiences. Start optimizing smarter today.